Stanford CS230 | Autumn 2025 | Lecture 1: Introduction to Deep Learning

Key Summary

- This lecture kicks off Stanford CS230 and explains what deep learning is: a kind of machine learning that uses multi-layer neural networks to learn complex patterns. Andrew Ng highlights its strength in understanding images, language, and speech by learning layered features like edges, textures, and objects. The message is that deep learning’s power comes from big data, flexible architectures, and non-linear functions that let models represent complex relationships.

- You learn the course structure: Part 1 covers the foundations (neural networks, backpropagation, optimization, and regularization). Part 2 focuses on convolutional neural networks (CNNs) for images, including object detection and segmentation. Part 3 moves to recurrent neural networks (RNNs) for sequences, such as language, speech, and time series.

- The logistics are clear and the workload is demanding: four programming homeworks, a midterm on theory, and a final project that applies deep learning to a real problem. Grading is 40% homeworks, 20% midterm, and 40% final project. You’ll use Python and TensorFlow, and you can get help through office hours, sections, and a Q&A forum.

- The lecture introduces the single-layer perceptron: compute z = w^T x + b, then apply an activation function a = g(z). Activation functions such as sigmoid, ReLU, and tanh add the non-linearity that real-world problems require. Without them, the model is just a straight-line (linear) predictor, and stacking more layers would not change that, because a composition of linear maps is itself linear.

- Training is done with gradient descent, which iteratively nudges the weights and bias downhill on the error surface. The update rules are w := w − α ∂E/∂w and b := b − α ∂E/∂b, where α is the learning rate controlling step size. This method improves the model by reducing the gap between predictions and true labels.

- Multi-Layer Perceptrons (MLPs) stack layers of neurons: input, hidden, and output layers. Each layer computes z^(l) = W^(l) a^(l−1) + b^(l) and then a^(l) = g(z^(l)); stacking such layers with a non-linear g creates non-linear decision boundaries. With hidden layers, the network can learn complex, hierarchical representations.
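To see why the activation g matters once layers are stacked, here is a minimal pure-Python sketch (no NumPy or TensorFlow, so it runs anywhere). The weights `W1`, `b1`, `W2`, `b2` and the helper names are hypothetical, chosen only for illustration. Without an activation, two layers fold into one linear layer with W = W2 W1 and b = W2 b1 + b2; putting ReLU between them breaks that collapse.

```python
def matvec(W, x):
    """Matrix-vector product, with W stored as a list of rows."""
    return [sum(w * x_j for w, x_j in zip(row, x)) for row in W]

def matmul(A, B):
    """Matrix-matrix product A @ B for lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def add(u, v):
    """Element-wise vector addition."""
    return [a + b for a, b in zip(u, v)]

def relu(z):
    """Element-wise ReLU: 0 for negative entries, the entry itself otherwise."""
    return [max(0.0, z_i) for z_i in z]

# Hypothetical weights: 2 inputs -> 2 hidden units -> 1 output.
W1, b1 = [[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]
W2, b2 = [[1.0, 1.0]], [0.0]

def linear_stack(x):
    """Two layers with NO activation: W2 (W1 x + b1) + b2."""
    return add(matvec(W2, add(matvec(W1, x), b1)), b2)

# The same map as ONE linear layer: W = W2 W1, b = W2 b1 + b2.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)

def relu_stack(x):
    """Same weights with g = ReLU between the layers: W2 ReLU(W1 x + b1) + b2."""
    return add(matvec(W2, relu(add(matvec(W1, x), b1))), b2)
```

With these particular weights the linear stack outputs 0 for every input (its folded matrix W is [[0, 0]]), while the ReLU version computes |x1 - x2|, a function that no single linear layer can represent.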

Why This Lecture Matters

This lecture is essential for anyone starting deep learning—students, engineers pivoting into AI, data scientists expanding from traditional ML, and product builders scoping AI projects. It clarifies what deep learning truly is, why it has surged in impact, and how to approach learning it in a structured, practical way. Understanding perceptrons, activations, gradient descent, and backpropagation gives you the mental toolkit to reason about any neural architecture, from simple MLPs to advanced CNNs and RNNs. In real work, these basics help you debug training failures, choose sensible activations and learning rates, and plan data and compute needs. The course logistics and project emphasis map directly to industry practice: you’ll build end-to-end systems, evaluate results, and iterate, mirroring applied AI development. In today’s industry—where AI powers vision in cars and hospitals, language in assistants and support, and speech in dictation and accessibility—knowing these fundamentals opens doors to impactful roles and research. Starting with a rock-solid foundation keeps you from treating deep learning as a black box and enables you to design, train, and deploy models with confidence and accountability.

Lecture Summary

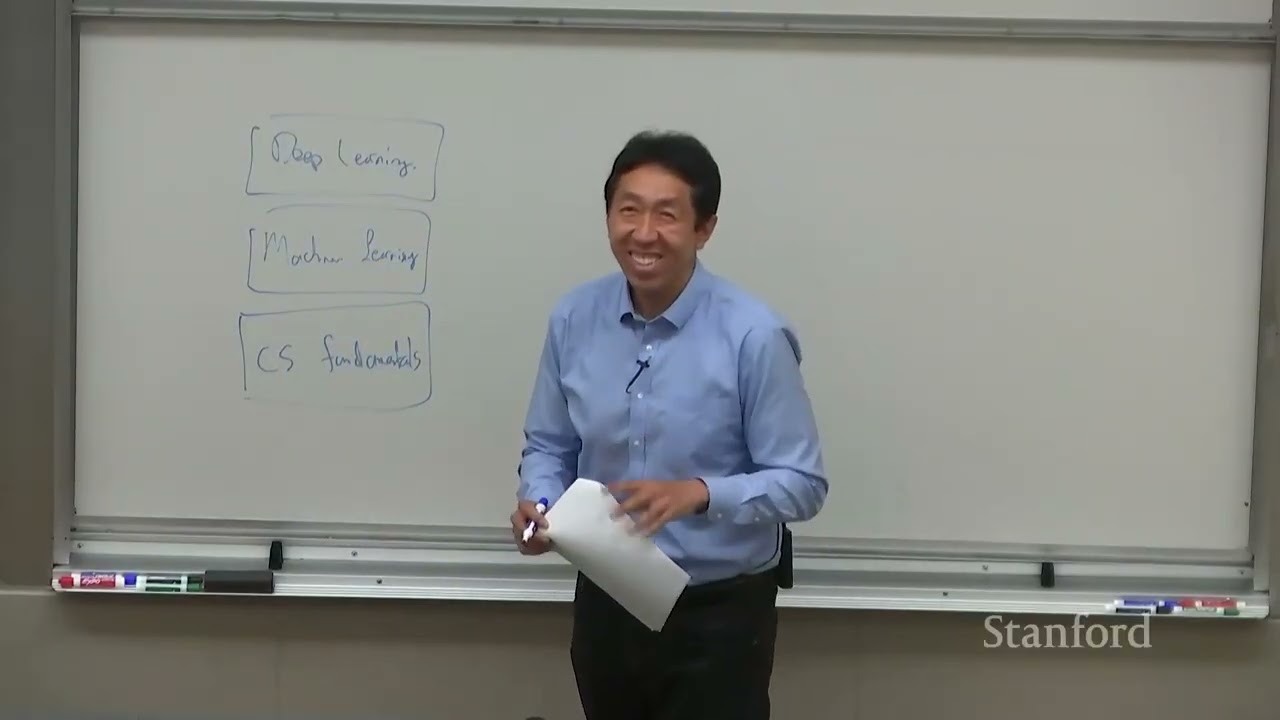

Overview

This opening lecture of Stanford CS230, taught by Andrew Ng, introduces deep learning, sets the course roadmap, explains logistics and expectations, and begins the technical foundations with perceptrons, activation functions, gradient descent, multi-layer perceptrons (MLPs), and backpropagation. It starts by defining deep learning as a subset of machine learning that uses neural networks with many layers to learn complex, hierarchical representations from data. A practical intuition is given through images: early layers detect simple edges, later layers detect textures and parts, and deeper layers recognize whole objects. The lecture emphasizes why deep learning has become so powerful: access to large datasets, flexible architectures that can be adapted to many tasks, and the capacity to model non-linear relationships.

The course is structured in three parts. Part 1 builds the foundation: neural networks, backpropagation (the algorithm to compute gradients efficiently), optimization algorithms (like gradient descent variants), and regularization techniques (methods to reduce overfitting). Part 2 focuses on convolutional neural networks (CNNs) for visual tasks including image recognition, object detection, and segmentation. Part 3 introduces recurrent neural networks (RNNs) to handle sequential data such as natural language, speech, and time series. Throughout, the course balances theory and hands-on practice, using Python and TensorFlow as the main tools. Students are encouraged to brush up on these technologies with provided tutorials and external resources.

Logistics are set with clear expectations: the class is demanding and requires consistent effort. There are four programming homeworks providing hands-on experience, a midterm exam testing theoretical understanding, and a final project where students apply deep learning to a real-world problem. Grading weights are 40% for homeworks, 20% for the midterm, and 40% for the final project. Support includes section meetings and office hours for one-on-one help, plus an online Q&A forum for community-driven assistance.

The technical portion of the lecture begins with the single-layer perceptron, the simplest neural network building block. Each neuron computes a weighted sum of inputs plus a bias, passes it through a non-linear activation function, and produces an activation (output). Common activation functions include sigmoid (outputs between 0 and 1), ReLU (outputs 0 for negative inputs and the input itself for positive inputs), and tanh (outputs between −1 and 1). The lecture explains why activation functions are essential: they introduce non-linearity, allowing the model to learn beyond straight-line relationships. Training a perceptron involves finding weights and bias that minimize prediction error, using gradient descent to iteratively update parameters in the direction that most reduces the error.
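A minimal sketch of the single neuron and its gradient-descent loop in plain Python. It assumes binary cross-entropy as the error E (the lecture does not fix a particular loss here); with a sigmoid output this choice gives the simple per-example gradient dE/dz = a − y. The learning rate, epoch count, and the AND dataset are illustrative assumptions, not course-provided values.

```python
import math

# Activation functions g(z) named in the lecture.
def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))   # output in (0, 1)

def relu(z):
    return max(0.0, z)                   # 0 for negative z, z itself otherwise

def tanh(z):
    return math.tanh(z)                  # output in (-1, 1)

def neuron(w, b, x, g=sigmoid):
    """Single neuron: z = w^T x + b, then a = g(z)."""
    z = sum(w_i * x_i for w_i, x_i in zip(w, x)) + b
    return g(z)

def train(X, Y, alpha=0.5, epochs=2000):
    """Batch gradient descent for one sigmoid neuron.

    Assumes E is the mean binary cross-entropy, for which dE/dz = a - y.
    """
    w, b, m = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        dw, db = [0.0] * len(w), 0.0
        for x, y in zip(X, Y):
            dz = neuron(w, b, x) - y                          # dE/dz for this example
            dw = [dw_i + dz * x_i / m for dw_i, x_i in zip(dw, x)]
            db += dz / m
        w = [w_i - alpha * dw_i for w_i, dw_i in zip(w, dw)]  # w := w - alpha * dE/dw
        b -= alpha * db                                       # b := b - alpha * dE/db
    return w, b

# Usage: logical AND is linearly separable, so a single neuron can learn it.
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
Y = [0, 0, 0, 1]
w, b = train(X, Y)
predictions = [1 if neuron(w, b, x) > 0.5 else 0 for x in X]
```

Because AND is linearly separable, one neuron suffices. XOR, by contrast, cannot be separated by a single straight line, which is exactly the limitation that motivates hidden layers.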

The lecture then generalizes to multi-layer perceptrons (MLPs), which stack multiple layers: input, one or more hidden layers, and an output layer. Each layer transforms the previous layer’s activations using its own weights, bias, and activation function. The crucial difference from a single-layer perceptron is that hidden layers allow the network to represent highly non-linear decision boundaries and hierarchical abstractions of data. Finally, the backpropagation algorithm is introduced as the key method to compute gradients for all weights and biases efficiently by doing a forward pass (compute activations) and a backward pass (propagate errors and compute derivatives).
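The forward and backward passes can be sketched for a one-hidden-layer MLP in plain Python. This is an illustrative implementation, not the course's code: it assumes a tanh hidden layer, a single sigmoid output unit, mean binary cross-entropy as E, and hypothetical hand-picked starting weights. The backward pass reuses the activations cached by the forward pass and applies the chain rule layer by layer, from the output back toward the input.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(W, b, a_prev):
    """z^(l) = W^(l) a^(l-1) + b^(l), with W stored as a list of rows."""
    return [sum(w * a for w, a in zip(row, a_prev)) + b_i for row, b_i in zip(W, b)]

def forward(p, x):
    """Forward pass: tanh hidden layer, then one sigmoid output unit."""
    a1 = [math.tanh(z) for z in dense(p["W1"], p["b1"], x)]
    a2 = [sigmoid(z) for z in dense(p["W2"], p["b2"], a1)]
    return a1, a2

def loss(p, X, Y):
    """Mean binary cross-entropy over the dataset (an assumed choice of E)."""
    total = 0.0
    for x, y in zip(X, Y):
        a = forward(p, x)[1][0]
        total -= y * math.log(a) + (1 - y) * math.log(1 - a)
    return total / len(X)

def backward(p, X, Y):
    """Backpropagation: dE/dW and dE/db for both layers, averaged over the data."""
    m = len(X)
    grads = {k: ([[0.0] * len(row) for row in v] if k[0] == "W" else [0.0] * len(v))
             for k, v in p.items()}
    for x, y in zip(X, Y):
        a1, a2 = forward(p, x)                       # forward pass, keep activations
        dz2 = [a2[0] - y]                            # dE/dz2 for sigmoid + cross-entropy
        dz1 = [dz2[0] * p["W2"][0][j] * (1 - a1[j] ** 2)  # chain rule back through tanh
               for j in range(len(a1))]
        for layer, dz, a_prev in (("2", dz2, a1), ("1", dz1, x)):
            for i, d in enumerate(dz):
                grads["b" + layer][i] += d / m       # dE/db^(l) = dz^(l)
                for j, a in enumerate(a_prev):
                    grads["W" + layer][i][j] += d * a / m  # dE/dW^(l) = dz^(l) a^(l-1)^T
    return grads

def step(p, grads, alpha):
    """One gradient descent update, theta := theta - alpha * dE/dtheta, for every entry."""
    new = {}
    for k, v in p.items():
        if k[0] == "W":
            new[k] = [[w - alpha * g for w, g in zip(rw, rg)] for rw, rg in zip(v, grads[k])]
        else:
            new[k] = [b - alpha * g for b, g in zip(v, grads[k])]
    return new

# Usage on XOR with hypothetical starting weights (3 hidden units).
X = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
Y = [0, 1, 1, 0]
params = {"W1": [[0.5, -0.4], [0.3, 0.8], [-0.6, 0.2]], "b1": [0.1, -0.1, 0.0],
          "W2": [[0.7, -0.5, 0.4]], "b2": [0.0]}
before = loss(params, X, Y)
after = loss(step(params, backward(params, X, Y), alpha=0.05), X, Y)
```

A standard sanity check, useful when learning stalls, is to compare entries of `backward` against a finite-difference estimate (E(θ + ε) − E(θ − ε)) / 2ε; the two should agree to several decimal places.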

By the end of this lecture, you know what deep learning is, where it’s used (image recognition, NLP, speech recognition), why it has become impactful, and how this course will build your knowledge step-by-step. You also gain the foundational equations of neurons and the training loop (forward computation and gradient-based updates), preparing you for deeper dives into optimization, regularization, CNNs, and RNNs in the coming sessions. The lecture’s core message is that deep learning’s strength lies in learning layered, non-linear representations from data, and that with disciplined study and practice, you can master these tools to solve meaningful real-world problems.

Key Takeaways

- Start with the neuron mental model: weighted sum plus bias, then a non-linear activation. Always write out z = w^T x + b and a = g(z) when debugging. Check whether your inputs are sensible and whether activations are saturating. These two equations explain much of the training behavior you’ll see.

- Non-linearity is not optional, so choose activations thoughtfully. ReLU is a strong default for hidden layers due to efficiency and gradient flow. Use sigmoid or tanh only when their output ranges are needed or helpful (for example, a sigmoid output unit for binary classification). Watch for saturation with sigmoid/tanh and dead neurons with ReLU.

- Tune the learning rate early. If loss explodes, lower α; if loss barely moves, increase it modestly. Plot loss across iterations to see trends clearly. A good α makes training stable and fast.

- Stack layers to learn hierarchy, but keep it reasonable. More layers increase capacity but also training difficulty and overfitting risk. Start shallow and scale complexity as needed. Monitor validation performance to guide depth and width.

- Use forward/backward pass thinking for every bug. If outputs look wrong, inspect activations layer by layer (forward). If learning stalls, verify gradients exist and have reasonable magnitudes (backward). This structured approach quickly isolates issues.

- Plan your time: this course is demanding by design. Break homeworks into phases: data prep, model build, training, evaluation, and report. Seek help early at office hours or the forum when blocked. Small daily progress beats last-minute marathons.

- Build a minimal working model first. Implement the simplest perceptron or tiny MLP to verify your pipeline. Once it learns something, scale up architecture and training time. This reduces wasted effort debugging big models.
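The learning-rate advice is easy to see on a toy problem. This sketch assumes a one-parameter error surface E(w) = (w − 3)^2, standing in for a real loss, and applies the update w := w − α dE/dw for three illustrative values of α.

```python
def gradient_descent(alpha, steps=20, w0=0.0):
    """Minimize the toy error E(w) = (w - 3)^2 with w := w - alpha * dE/dw.

    Returns the final w and the loss recorded after every step.
    """
    w = w0
    losses = []
    for _ in range(steps):
        grad = 2.0 * (w - 3.0)        # dE/dw for the assumed quadratic
        w -= alpha * grad
        losses.append((w - 3.0) ** 2)
    return w, losses

for alpha in (0.01, 0.1, 1.1):
    w, losses = gradient_descent(alpha)
    print(f"alpha={alpha}: final w={w:.3f}, final loss={losses[-1]:.3g}")
```

On this quadratic each step multiplies the distance to the minimum by (1 − 2α): α = 0.01 barely moves the loss in 20 steps, α = 0.1 converges close to w = 3, and α = 1.1 overshoots further on every step, so the loss explodes.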

Glossary

Deep Learning

A kind of machine learning that uses many layers of simple math units (neurons) to learn complex patterns. Each layer turns inputs into slightly more useful forms, step by step. By stacking many layers, the system can handle hard tasks like seeing objects in images or understanding speech. It learns from examples instead of being told exact rules. More data usually helps it get better.

Machine Learning

A way for computers to learn from data rather than by following only hand-written rules. The computer looks for patterns linking inputs to outputs. Over time, it adjusts its internal settings to make better predictions. This helps solve problems that are too tricky to program by hand.

Neural Network

A network of simple units called neurons that each do a small calculation and pass results forward. Many neurons and layers together can learn tough patterns. The network adjusts weights and biases to improve. Non-linear activations let it learn curves, not just straight lines.

Perceptron

The simplest type of neuron that sums inputs times weights, adds a bias, and applies an activation function. It’s a tiny decision-maker. By itself it can only draw straight-line decisions. It is the building block for deeper networks.